Automatic Product Tagging in E-Commerce: A Complete Guide

TL;DR

Automated product tagging uses AI to assign attributes (colour, size, material, category, intent) to products at scale, replacing slow, inconsistent manual processes. Done well, it lifts search relevance, reduces zero-result queries, improves recommendation accuracy, and cuts catalogue onboarding time significantly. Done poorly, or without clean training data, it amplifies inconsistency rather than fixing it.

This guide covers what automated tagging is, why it matters, where it breaks, and how to build a program that produces consistent, catalogue-ready tags at scale.

Introduction

A shopper visits your site, types 'metallic purple leather mules' into the search bar, and finds nothing. Not because you don't sell them. Because the product is tagged 'shoes' and the description stops there. They leave, find the product on a competitor's site in under 30 seconds, and you've lost the sale to a tagging gap.

This happens more than most e-commerce teams realize. Baymard Institute research puts 41% of e-commerce sites below acceptable search performance.

Three out of four shoppers abandon a purchase after a failed search, and 48% go straight to a competitor. The root cause, more often than not, isn't the search engine. It's the product data the search engine has to work with.

Automated product tagging is how catalog teams fix this at scale, assigning structured, consistent attributes to every SKU without relying on manual entry that breaks under volume. But automation isn't a plug-and-play fix.

The quality of the tags it produces depends entirely on the quality of the training data behind it.

Here's what this guide covers:

- What automated product tagging is,

- Why it matters for search and sales,

- Where manual processes break, and

- How to build a tagging program that actually scales.

Before automated tagging can fix search and navigation issues, you need to understand the role data labeling plays in making product data usable for AI systems.

What is Automated Product Tagging?

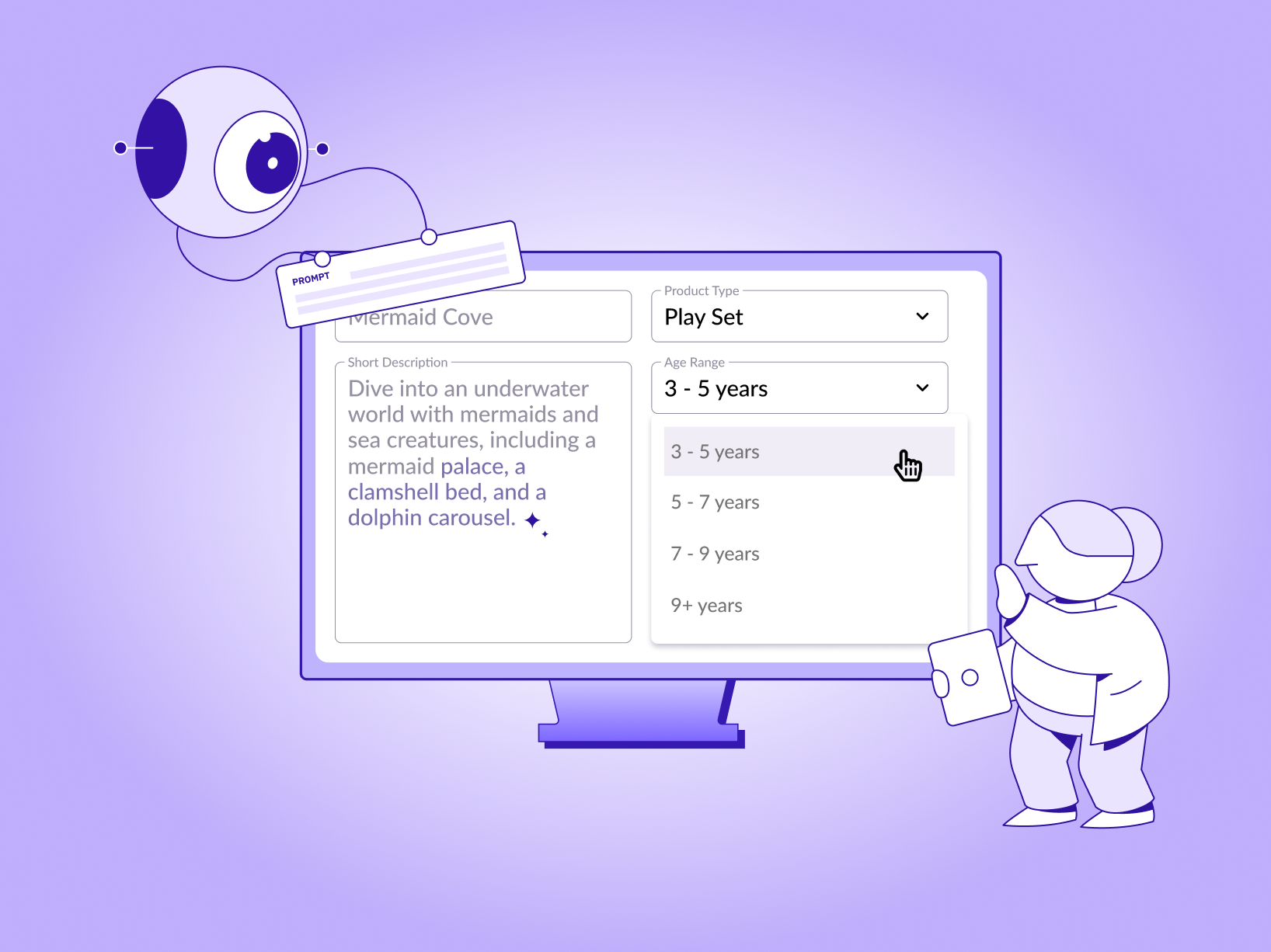

Automated product tagging is the process of using AI (specifically computer vision, natural language processing, and machine learning classifiers) to assign structured labels to products without human entry for each item.

Instead of a merchandiser manually typing 'navy, cotton, slim-fit, casual' into fields for every new SKU, a trained model reads the product image, title, and description and automatically assigns those attributes.

The tags themselves are the structured metadata that describe a product: category, subcategory, color, size, material, style, occasion, brand, and any other attribute relevant to how shoppers search and how systems need to filter.

In a well-tagged catalog, every product has a consistent set of attributes applied through the same taxonomy, regardless of how it was described by the supplier or uploaded by the seller.

What makes automated tagging different from simple keyword extraction is the classification layer. A keyword extraction tool might pull 'blue' and 'slim' from a product title. An automated tagging system classifies those terms against a defined taxonomy: it maps 'blue' to the correct color swatch in your attribute library and 'slim' to the fit category, and it infers from the image that the material is denim, even if the supplier didn't mention it. The model learns these classifications from labeled training data, which means the quality of its output is directly tied to the quality of the labels it trained on.
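To make the distinction concrete, here is a minimal sketch in Python. It assumes a tiny hand-written taxonomy (the `TAXONOMY` dictionary and both function names are hypothetical) and uses a lookup table as a stand-in for the classification step; a production system would use a trained model and would also read the image, which this sketch does not attempt.

```python
# Hypothetical taxonomy: attribute field -> canonical value -> accepted raw terms.
TAXONOMY = {
    "color": {"blue": {"blue", "azure"}},
    "fit": {"slim": {"slim", "skinny"}},
}


def extract_keywords(title: str) -> list[str]:
    """Plain keyword extraction: lowercase tokens with no structure attached."""
    cleaned = title.lower().replace("-", " ").replace(",", " ")
    return cleaned.split()


def classify(title: str) -> dict[str, str]:
    """Map raw title terms onto taxonomy fields and canonical values."""
    tokens = set(extract_keywords(title))
    tags: dict[str, str] = {}
    for field, values in TAXONOMY.items():
        for canonical, synonyms in values.items():
            if tokens & synonyms:  # any accepted term appears in the title
                tags[field] = canonical
    return tags


keywords = extract_keywords("Blue Slim-Fit Jeans")  # flat list of words
tags = classify("Blue Slim-Fit Jeans")              # structured field/value pairs
```

Keyword extraction returns a flat list of words, while the classification step returns field/value pairs that a search filter or recommendation engine can use directly.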

To understand why automated tagging is worth the effort, you need to see the role product tags play across search, navigation, recommendations, and catalog operations.

Pro tip: Automated tagging doesn't replace a taxonomy — it applies one. Before building or buying an automated tagging system, define your attribute taxonomy first: the categories, subcategories, and attribute fields that matter for your catalog. A model trained on a poorly defined taxonomy will produce well-structured garbage.

What is the Purpose of Product Tagging in E-Commerce?

Product tags are the connective tissue between what a shopper is looking for and what you actually sell. They serve six distinct functions across an e-commerce operation, each of which fails when tagging is inconsistent or incomplete.

- On-site search relevance

Search engines, including the one on your own site, match queries to products based on structured fields, not just text strings. When a shopper searches 'linen shirt women summer,' the search system looks for products with those exact attribute values, not products whose descriptions happen to contain those words somewhere. Products without consistent attribute tags return as irrelevant results or don't return at all, creating zero-result queries that send shoppers away.

- Filtered navigation and faceted search

The 'Filter by colour' or 'Filter by material' sidebars on category pages only work if every product in that category has those attributes tagged. A catalogue in which some products have colour tags and others don't produces filter results that shoppers can't trust. They filter for 'navy' and get 60% of the relevant products. The other 40% are invisible because someone didn't tag them.

- Recommendation accuracy

Recommendation engines ('similar items,' 'customers also bought,' 'complete the look') work by matching attribute similarity between products. When two products that are genuinely similar have different attribute labels (one tagged 'trousers,' one tagged 'chinos'), the system treats them as different product types. Recommendation accuracy degrades directly from taxonomy inconsistency.

- SEO and external discoverability

Search engines index structured product data. Rich product attributes (colour, material, size range, and occasion) feed into the schema markup that powers rich results in Google Shopping, image search, and AI-generated answers. Products with sparse or inconsistent tagging are less likely to surface in these channels, limiting organic traffic from high-intent shoppers.

- Catalogue operations and seller onboarding

Marketplaces and multi-seller platforms face a specific version of this problem: every seller uses different terminology. One seller calls it 'midnight blue,' another 'dark navy,' another 'dark blue.' Without automated tagging that normalises these into a single taxonomy value, the catalogue becomes fragmented by seller rather than organized by product. Data annotation for e-commerce and retail covers how structured labelling keeps catalogues consistent as seller volume and SKU count grow.

- Return rate reduction

When a product is misrepresented (a wrong size guide, missing material information, or an inaccurate colour), the customer who buys it is likely to return it. Correct, specific product tags set accurate expectations before purchase. Teams that invest in tagging quality consistently see return rates fall, particularly in apparel, furniture, and electronics, where attributes are the primary basis for purchase decisions.
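One quick way to check whether filters can be trusted is to measure attribute coverage per category. The sketch below assumes each product is a dictionary with a `category` key and a `tags` dictionary; that record shape and the function name are illustrative assumptions, not a specific platform's schema.

```python
from collections import defaultdict


def attribute_coverage(products, attribute):
    """Return {category: share of products with a non-empty value for `attribute`}."""
    totals = defaultdict(int)
    tagged = defaultdict(int)
    for product in products:
        category = product["category"]
        totals[category] += 1
        # Missing keys and empty strings both count as "not tagged".
        if product.get("tags", {}).get(attribute):
            tagged[category] += 1
    return {category: tagged[category] / totals[category] for category in totals}


# Illustrative catalogue: five shirts, only three with a colour tag.
catalogue = [
    {"category": "shirts", "tags": {"colour": "navy"}},
    {"category": "shirts", "tags": {"colour": "navy"}},
    {"category": "shirts", "tags": {"colour": "white"}},
    {"category": "shirts", "tags": {}},
    {"category": "shirts", "tags": {"colour": ""}},
]
coverage = attribute_coverage(catalogue, "colour")
```

A category where coverage for a filterable attribute sits well below 100% is one where the filter sidebar is hiding products from shoppers.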

When product tagging is done correctly, the benefits go beyond cleaner data: it directly affects sales, discoverability, and how efficiently your catalog performs.

The Impact of Effective Product Tagging on Sales and Visibility

The downstream effects of tagging quality are measurable, and they compound. A catalogue with clean, consistent attribute tags doesn't just rank better in search; it improves every AI system that runs on top of it.

Search relevance improves because the match between query terms and product attributes becomes more precise. Shoppers find what they searched for on the first page rather than abandoning the search. This directly lifts click-through rate on search results and reduces bounce from zero-result queries, one of the clearest signals that a catalogue has a tagging problem.
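As a rough way to track this signal, the zero-result rate can be computed from a search log. The sketch below assumes each log entry is a dictionary with `query` and `result_count` fields; the field names and functions are hypothetical and would need adapting to whatever your search platform exports.

```python
from collections import Counter


def zero_result_rate(search_log):
    """Share of searches that returned no products (0.0 for an empty log)."""
    if not search_log:
        return 0.0
    zero = sum(1 for entry in search_log if entry["result_count"] == 0)
    return zero / len(search_log)


def top_zero_result_queries(search_log, n=5):
    """Most frequent queries that returned nothing, as (query, count) pairs."""
    counts = Counter(
        entry["query"].strip().lower()
        for entry in search_log
        if entry["result_count"] == 0
    )
    return counts.most_common(n)


# Illustrative log entries, not real data.
log = [
    {"query": "metallic purple leather mules", "result_count": 0},
    {"query": "Metallic purple leather mules", "result_count": 0},
    {"query": "navy chinos", "result_count": 42},
    {"query": "linen shirt", "result_count": 17},
]
rate = zero_result_rate(log)
worst = top_zero_result_queries(log, n=1)
```

The most frequent zero-result queries are a useful starting list of attributes that are missing from the catalogue or tagged under a different term.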

Product tagging also improves recommendation quality because the similarity signals the recommendation engine uses are more accurate. When 'similar items' actually are similar, the click-through rate on recommendation carousels goes up. Cross-sell and upsell recommendations that are grounded in real attribute overlap, not just co-purchase history, perform better because they make sense to the shopper.
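To illustrate how label inconsistency weakens a similarity signal, the sketch below uses Jaccard similarity over attribute pairs. This is one simple measure chosen for illustration; real recommendation engines typically use richer signals, and the product records here are made up.

```python
def attribute_similarity(tags_a: dict, tags_b: dict) -> float:
    """Jaccard similarity over (field, value) pairs: shared pairs / all pairs."""
    pairs_a, pairs_b = set(tags_a.items()), set(tags_b.items())
    if not pairs_a and not pairs_b:
        return 0.0
    return len(pairs_a & pairs_b) / len(pairs_a | pairs_b)


# Two genuinely similar products whose sellers used different type labels.
product_a = {"type": "chinos", "color": "navy", "fit": "slim"}
product_b = {"type": "trousers", "color": "navy", "fit": "slim"}
before = attribute_similarity(product_a, product_b)

# The same pair after both type labels are normalised to one taxonomy value.
normalised_b = {**product_b, "type": "chinos"}
after = attribute_similarity(product_a, normalised_b)
```

With the inconsistent type label, only two of four distinct attribute pairs match; once the label is normalised, the two products are identical on every tagged attribute.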

It also improves catalog onboarding speed. For platforms adding new sellers or seasonal inventory, manually tagging incoming SKUs is a bottleneck. Automated tagging can cut onboarding time from days to hours for standard product types, which means new inventory becomes discoverable faster. The compounding effect: products that take two weeks to onboard are invisible to shoppers for those two weeks. Products that onboard in hours start generating revenue immediately.

For a detailed breakdown of how labeling choices affect downstream search performance, this guide to AI-powered data labeling platforms covers the workflow decisions that determine whether automated annotation produces usable training data or compounds existing catalog inconsistencies.

The table below explains the difference between manual and automated product tagging.

Pro tip: The accuracy gap between automated and manual tagging is smallest for standard, high-volume product types and largest for niche, seasonal, or edge-case items. Build your automation program to handle the standard 80% at speed, and route the remaining 20% to human review rather than forcing the model into low-confidence territory.
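A minimal sketch of that routing rule, assuming the tagging model returns a confidence score between 0 and 1 for each predicted attribute. The `Prediction` class, the `route` function, and the 0.9 threshold are all illustrative assumptions; the right threshold depends on your catalog and should be tuned against reviewed samples.

```python
from dataclasses import dataclass


@dataclass
class Prediction:
    sku: str
    attribute: str
    value: str
    confidence: float  # model confidence in [0, 1]


def route(predictions, threshold=0.9):
    """Split predictions into auto-accepted tags and a human review queue."""
    auto_accept, human_review = [], []
    for prediction in predictions:
        if prediction.confidence >= threshold:
            auto_accept.append(prediction)
        else:
            human_review.append(prediction)
    return auto_accept, human_review


# Illustrative predictions: a standard item and a niche one.
batch = [
    Prediction("SKU-001", "category", "t-shirt", 0.98),
    Prediction("SKU-002", "category", "heritage craft", 0.55),
]
accepted, queued = route(batch)
```

Tracking the share of items that land in the review queue per category also shows where more labeled training examples would pay off first.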

To get these benefits consistently, e-commerce teams need a tagging process that can keep up with catalog growth, which is why the choice between manual and automated tagging becomes critical.

Product Tagging Challenges in E-Commerce

Most teams that have tried to scale e-commerce product tagging have run into the same problems. They're not unique to any one platform or catalog type; they're structural challenges that show up at scale regardless of approach.

- Taxonomy drift

Taxonomy drift happens when the attribute definitions that were clear at the start of a tagging program gradually lose consistency as the catalog grows. 'Casual' means something different to different annotators. 'Midi' in skirt length means one thing in one category and something subtly different in another. Over time, the taxonomy becomes an approximation rather than a standard. Automated tagging amplifies this: a model trained on drifted labels will reproduce the drift at scale.

The fix is governance, not tooling. Taxonomy definitions need to be documented and versioned. Annotators and automated systems need to be calibrated against the same reference set periodically. When the taxonomy changes (and it will, as product types and customer language evolve), the labeling program needs a process for updating existing tags, not just applying new ones going forward.

- Multi-seller and multi-language catalogs

Marketplace platforms face a specific version of the consistency problem. Sellers upload product data in their own terminology, their own language conventions, and against their own category structures. Automated tagging that runs on raw seller input inherits all that variation. The system needs to normalise against your taxonomy, not just extract what's there, which requires training on examples of the mapping between supplier terminology and your internal attribute library.

Multi-language catalogs add another layer. A color that's 'azul marino' in Spanish and 'marine' in French needs to map to the same taxonomy value as 'navy' in English. This isn't translation; it's taxonomy normalisation across languages, which requires labeled training data in each language and human review of edge cases where the normalisation is ambiguous.

To fix this, treat taxonomy normalisation as a labeling problem, not just a tagging problem. Define a standard attribute library, then train the tagging system on labeled examples that map seller terms, regional variants, and different languages to those approved values. For multi-language catalogs, maintain labeled data for each language and send low-confidence cases to human review instead of forcing automatic classification.

- Edge cases and niche categories

Automated tagging models perform best on the product types they've seen many examples of. High-volume categories like t-shirts, shoes, and electronics have enough labeled examples that models can handle them reliably. Niche product types (heritage crafts, specialty tools, limited-edition collaborations) often don't have enough training data for the model to be confident. Deploying automation on these categories without a human review layer produces a different kind of error than manual tagging: confident wrong answers rather than uncertain right ones.

To fix this, train models on high-volume categories first, and route niche or low-confidence items to human review. Expand training data gradually with labeled examples from edge cases instead of forcing full automation from the start.

- Training data quality

This is the challenge that most automated tagging vendors underplay. An AI tagging system is only as accurate as the labeled data it trained on. If the training data was produced by annotators who applied the taxonomy inconsistently, the model learns the inconsistency.

If the training data was labeled without domain expertise (general annotators tagging specialist products), the model learns imprecise classifications. The quality ceiling of any automated tagging system is set by the quality of its training data, not by the model architecture.

Choosing the right ecommerce annotation partner is often the decision that determines whether automation delivers on its promise or creates a new source of catalog debt.

To fix this, use trained annotators with clear taxonomy guidelines, review labeled samples regularly, and build training datasets with domain-specific expertise instead of generic labeling. High-quality annotation upfront leads to more accurate automation later.
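One common way to put a number on "reviewing labeled samples" is to measure how often two annotators (or an annotator and a reference set) agree on the same items, corrected for chance agreement. The sketch below computes Cohen's kappa for that purpose; the choice of metric, the function name, and the sample labels are illustrative and not something the workflow above prescribes.

```python
from collections import Counter


def cohens_kappa(labels_a, labels_b):
    """Chance-corrected agreement between two label lists for the same items."""
    if len(labels_a) != len(labels_b) or not labels_a:
        raise ValueError("label lists must be non-empty and the same length")
    n = len(labels_a)
    # Observed agreement: share of items where both annotators chose the same label.
    observed = sum(a == b for a, b in zip(labels_a, labels_b)) / n
    # Expected agreement if each annotator labeled at random with their own label rates.
    counts_a, counts_b = Counter(labels_a), Counter(labels_b)
    expected = sum(counts_a[label] * counts_b[label] for label in counts_a) / (n * n)
    if expected == 1.0:
        return 1.0  # both annotators used a single identical label throughout
    return (observed - expected) / (1 - expected)


# Illustrative labels for the 'style' attribute on four products.
annotator = ["casual", "casual", "formal", "formal"]
reference = ["casual", "formal", "formal", "formal"]
kappa = cohens_kappa(annotator, reference)
```

A kappa that falls over successive review rounds for the same attribute is a reasonable early warning of taxonomy drift, since it suggests the definition is being read differently over time.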

How Taskmonk Supports Automated Product Tagging Programs

Most e-commerce teams that invest in automated tagging hit the same wall: the AI produces output quickly, but the output quality is only as good as the training data, and building clean, consistent training data at catalog scale is where the program stalls.

Taskmonk is built to solve exactly that part.

-

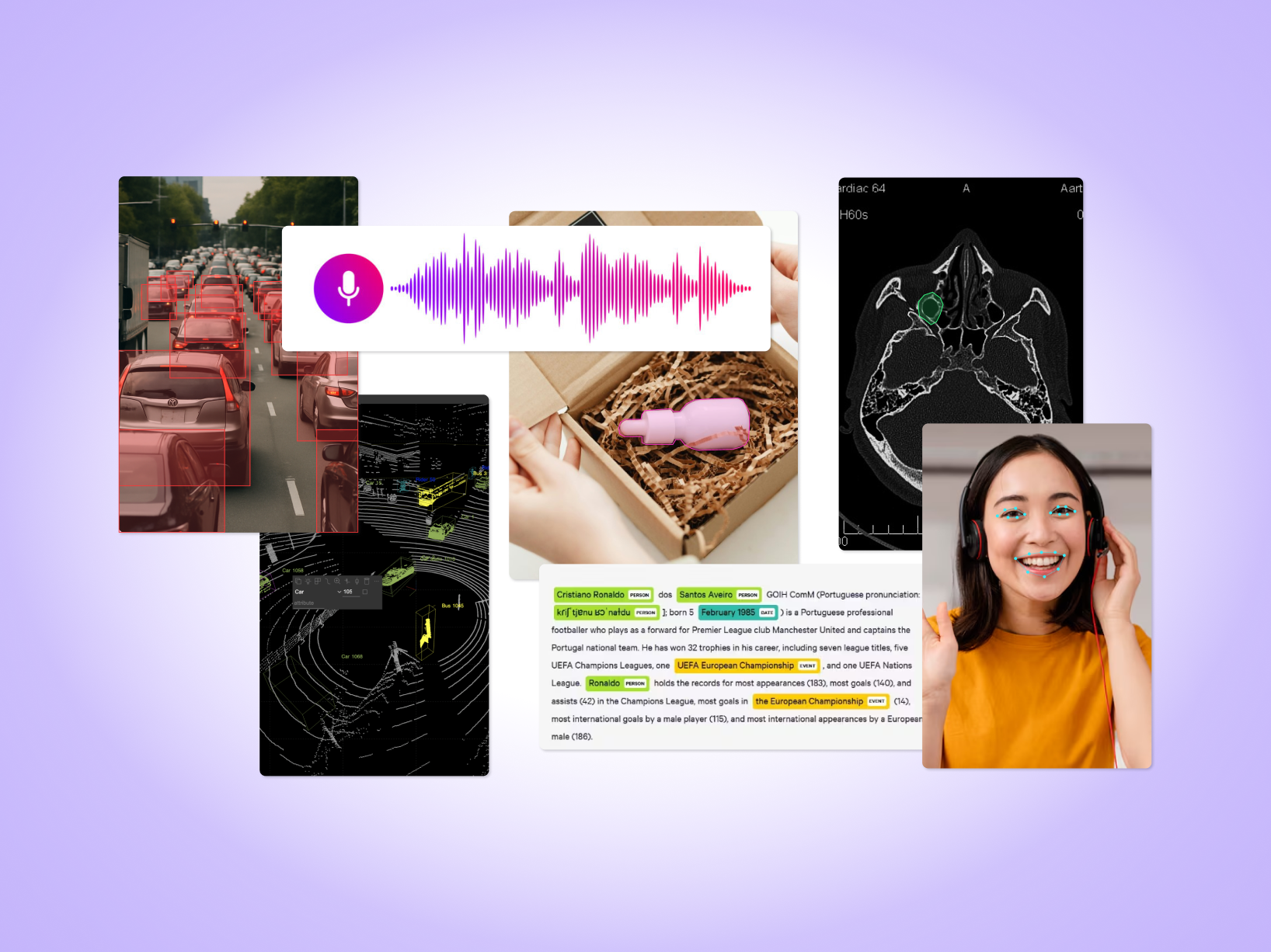

[POV] Attribute tagging and taxonomy standardisation.

Taskmonk's e-commerce annotation workflows handle the extraction and standardisation of product attributes (size, color, material, specifications) against a defined taxonomy with enforced quality checks. The output feeds directly into automated tagging models as training data or runs as the annotation layer itself for catalogs that need human-verified labels.

- Product image and UGC labeling.

For visual tagging models that classify from product images, Taskmonk provides bounding boxes and polygon labels using category-specific checklists and visual examples. This is the labeled image data that trains visual search, similar-item retrieval, and image-to-attribute extraction models, the ones that can tag a product from a photo, even when the text description is thin.

- Search relevance and intent labeling.

Taskmonk labels search queries by intent, maps synonyms and adjective-attribute relationships, and grades search results to eliminate zero-result queries. For e-commerce teams whose automated tagging improvement directly targets search performance, this is the layer that connects the product attribute taxonomy to how shoppers actually phrase queries, including regional language variations and common misspellings.

- Scale and delivery.

Taskmonk has saved clients $4M+ through efficiency gains and faster catalog onboarding. The annotator network of 7,500+ is trained specifically for e-commerce tasks including product taxonomy, attribute tagging, catalog enrichment, and text/image labeling. Weekly SLAs, 99.9% uptime on Azure, and a no-code workflow builder mean new annotation tasks can be set up and running in 24-48 hours. For marketplace teams adding seller inventory at speed, that turnaround matters.

If you want to see what Taskmonk's annotation output looks like on your catalog data, the ecommerce annotation page covers the specific workflows and a path to a proof-of-concept with sample data.

Conclusion: The Future of Product Tagging and AI Solutions

Automated product tagging isn't a future capability; it's a present requirement for any e-commerce operation managing more than a few thousand SKUs. The teams that are ahead on this aren't necessarily using more sophisticated models. They've invested earlier in clean training data, defined taxonomies with real governance, and human review layers for the edge cases that break automation.

What's changing is the speed at which AI can handle the standard workload, which raises the bar for what's expected of the human layer. The human role in product tagging is shifting from bulk attribute entry to training data curation, taxonomy governance, and quality review on low-confidence outputs. That shift doesn't reduce the need for human expertise — it concentrates it where it matters more.

The catalogs that will perform best in search, recommendations, and AI-generated shopping experiences over the next few years are the ones being built on clean, consistent, well-governed product data today. The window to build that foundation while competitors are still running on messy catalogs is narrower than it looks.

FAQs

- What is automated product tagging?

Automated product tagging is the use of AI (computer vision, NLP, and ML classifiers) to automatically assign structured attributes to products in an e-commerce catalogue. Instead of a human manually entering color, material, size, and category for each SKU, a trained model reads the product image and text and applies the correct taxonomy values. The model's accuracy depends on the quality and consistency of the labeled training data it learned from.

- What is the best AI to automate product tagging in e-commerce?

There's no single best tool; the right choice depends on your catalog type, taxonomy complexity, and whether you need a platform, a managed service, or both. Visual search platforms like Syte and Pixyle specialize in fashion and apparel. General-purpose catalog enrichment platforms include Zoovu and Vue.ai. For teams that need annotation infrastructure to train or improve their own models, or that need human-verified labeling at scale, Taskmonk provides the training data and annotation workflows that feed automated tagging systems. Most production-grade programs use a combination: automation for standard high-volume product types, and human-in-the-loop review for niche categories and edge cases.

- What are automatic tags in e-commerce?

Automatic tags are the product attributes assigned by an AI system rather than entered manually. They cover the same fields as manual tags (category, color, size, material, style, occasion, brand) but are generated by a model that reads the product image, title, and description and classifies them against a predefined taxonomy. The difference from simple keyword extraction is that the model maps what it reads to the specific values in your taxonomy, normalizing different supplier terminology into consistent catalog labels.

- How does automated product tagging affect SEO?

Directly and significantly. Search engines index structured product data and use it to surface products in rich results, Google Shopping, and AI-generated shopping responses. Products with complete, accurate attribute tags feed into schema markup more effectively, improve relevance matching for long-tail search queries, and reduce the 'thin content' signals that hurt organic rankings. The teams seeing the biggest SEO lifts from automated tagging are usually the ones fixing zero-result queries first: products that weren't surfacing for specific attribute searches because the tags were missing.

- What are product tags in e-commerce?

Product tags are the structured labels attached to a product in an e-commerce catalog that describe its attributes. They include category and subcategory (what type of thing it is), visual attributes (color, size, material, pattern), functional attributes (occasion, fit, compatibility, specifications), and sometimes behavioral signals (trending, seasonal, bestseller). Together they form the metadata that powers on-site search, filtered navigation, recommendation engines, and external search engine visibility. The more complete and consistent the tags, the better every downstream system that depends on them performs.
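As a small illustration of how tags feed schema markup (the SEO answer above), the sketch below turns a tag dictionary into schema.org `Product` JSON-LD. The `product_jsonld` helper, the tag field names, and the sample values are assumptions for illustration; `name`, `sku`, `color`, `material`, `category`, and `brand` are existing schema.org `Product` properties, but which ones a given search engine uses for rich results should be checked against its own documentation.

```python
import json


def product_jsonld(name: str, sku: str, tags: dict) -> str:
    """Build schema.org Product JSON-LD from a product's attribute tags."""
    doc = {
        "@context": "https://schema.org",
        "@type": "Product",
        "name": name,
        "sku": sku,
    }
    # Copy over only the attributes that are actually tagged.
    for field in ("color", "material", "category"):
        if tags.get(field):
            doc[field] = tags[field]
    if tags.get("brand"):
        doc["brand"] = {"@type": "Brand", "name": tags["brand"]}
    return json.dumps(doc, indent=2)


# Illustrative product; the brand and SKU are made up.
markup = product_jsonld(
    "Leather mules",
    "SKU-123",
    {"color": "metallic purple", "material": "leather", "brand": "ExampleBrand"},
)
```

An untagged attribute simply never reaches the markup, which is the mechanism behind sparse tagging limiting external discoverability.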
