TL;DR

Object detection with deep learning enables an AI model to identify objects and determine their locations using bounding boxes. Unlike image classification, which assigns a single label to the entire image, object detection can recognise multiple objects and pinpoint where they appear. Modern models such as YOLO, Faster R-CNN, SSD, and transformer-based detectors analyse images, extract visual features, and predict both image category and their spatial positions.

However, successful deep learning for object detection is not only determined by model architecture, but data quality and diversity play an important role in determining how well the model performs. Accurate annotations, balanced datasets, and real-world variability are essential for building a reliable detection system that can operate effectively in industries like healthcare, retail, manufacturing, and autonomous systems.

Introduction

Not long ago, machines that could “see” & “learn” were mostly confined to science-fiction.

Today, computer vision systems powered by deep learning unlock our phones using our faces, factories run visual inspections without a blink, and hospitals use AI to detect anomalies in scans before humans take a look.

Welcome to the era of object detection machine-learning.

With deep learning for object detection, AI models can detect tumours in healthcare, shelf inventory in retail, spot manufacturing defects, and monitor traffic in smart cities.

Deep learning for object detection is not only about recognising that something exists in an image but pinpointing where it exists, often in milliseconds.

What makes deep learning special is its neural networks & the ability to learn visual patterns from data, unlike handcrafted rules of traditional computer vision. The former is a way smarter, faster, and more scalable detection system than the latter.

This is due to the fact that instead of being told what a car looks like, they study thousands of labelled data examples and figure it out themselves.

But the tricky part here is that, though they look brilliant in a testing environment, things change in production.

Why?

Because data quality is overlooked compared to architecture and model selection.

The difference between a flashy demo and a production-ready system often comes down to one thing: data quality.

And this leads us to a real question

What makes image detection with deep learning work in production?

This guide covers how deep learning of object detection works, what its challenges are, and how to improve it. Let’s first understand deep learning for object detection.

What is object detection?

Object detection is a computer vision capability that enables AI systems to identify objects in images and determine their exact locations.

Think of it this way, if you show a machine a busy street photo, object detection with machine learning enables it to say things like;

- There is a car here

- A pedestrian is crossing here

- A traffic signal is on the right

It doesn’t just recognise that objects exist, it draws bounding boxes around them and assigns the right labels.

Object detection covers two main problems.

- Classification

- Localization

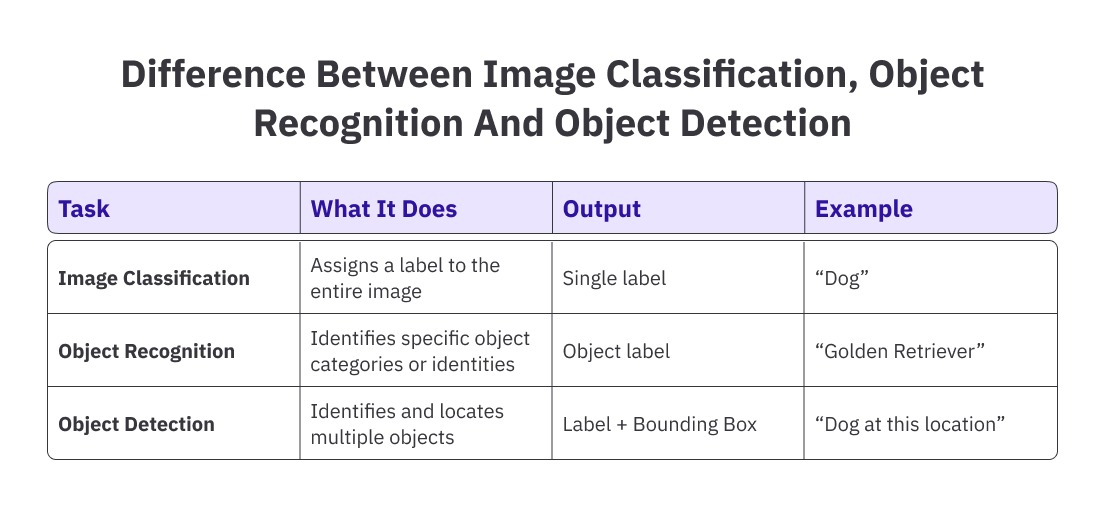

Because it solves both simultaneously, object detection wth image classification is more complex than image classification.

Here is the fundamental difference between image classification and image detection.

Here is how object detection has evolved.

Modern object detection systems are the result of years of technological progress. The journey of traditional computer vision methods to deep learning models shows how the field has matured.

To better understand how object detection with machine learning has progressed over time, the following table highlights the key stages in its evolution.

How Deep Learning of Object Detection Works

Object detection with deep learning allows a machine to analyze an image, identify objects within it, and determine their locations. Behind this capability is a structured learning pipeline where neural networks process images, extract visual patterns, and gradually learn to predict object categories and bounding boxes.

To understand how object detection works, imagine what happens when an image passes through a deep learning model.

-

Image enters the neural network

Every image is essentially a grid of pixels. When the image enters the model, those pixels are converted into numerical values that the neural network can process.

The model does not initially understand objects. Instead, it begins by analysing basic visual patterns in the image.

This process is handled by a Convolutional Neural Network (CNN), which scans the image using filters that detect patterns. -

The model extracts the visual features

As the image moves through the layers of the CNN, the network gradually builds a deeper understanding of the visual content.

In the early layers, the model detects simple features such as edges, corners, and gradients. These basic elements are the building blocks of all visual structures.

In the middle layers, the model begins combining these simple features into shapes and textures. At this stage, it may detect patterns such as wheels, windows, faces, or surface textures.

In the deeper layers, the model combines all these learned features to recognise complete objects such as cars, people, animals, or defects in manufactured products.

This layered learning process is known as hierarchical feature extraction, and it allows the model to move from raw pixels to meaningful objects. -

The model looks for possible object locations

Once the model understands the visual features in the image, it must determine where the objects might be located.

Many object detection models divide the image into a grid of smaller regions. Each grid cell becomes responsible for detecting objects whose centre falls within that region.

To help detect objects of different shapes and sizes, the model also uses predefined reference boxes called anchor boxes. These anchor boxes represent common object dimensions.

The model then predicts how each anchor box should be adjusted so that it aligns with the actual object in the image. -

Predicting object classes and locations

For every potential object region, the model makes three key predictions: First, it predicts the object class, such as car, pedestrian, product, or defect. Second, it predicts the bounding box coordinates, which determine the object's exact location in the image. Third, it predicts a confidence score that represents how certain the model is about the detection.

These predictions are generated by the detection head, which processes the visual features extracted by the backbone network. -

Refining Bounding Boxes

To accurately locate objects, the model uses a technique known as bounding box regression.

Instead of guessing the object location directly, the model predicts adjustments to the anchor boxes. These adjustments refine the position, width, and height of the box so that it tightly surrounds the object.

During training, the predicted bounding boxes are compared with the ground-truth boxes provided in the annotated dataset. The model measures how closely the predicted boxes overlap with the real objects using metrics such as Intersection over Union (IoU).

The training objective is to maximise this overlap, ensuring that predicted boxes accurately represent object boundaries. -

Learning through iterative training

Object detection models improve through repeated training cycles.

During each training step, the model makes predictions on labelled images. The difference between the predicted outputs and the annotated ground truth is calculated using a loss function.

This loss measures two things: whether the model correctly identified the object category and whether it predicted the bounding box location accurately.

Using a process called backpropagation, the model updates its internal weights to reduce this loss. Over thousands of training iterations, the model gradually becomes better at detecting objects and locating them precisely. -

Evaluating and deploying a model

Once training is complete, the model is evaluated using metrics such as mean Average Precision (mAP), precision, recall, and IoU thresholds. These metrics measure how accurately the system detects objects across different scenarios.

After achieving acceptable performance, the model can be deployed in real-world systems such as healthcare diagnostics, retail analytics, industrial inspection, or autonomous navigation.

In production environments, models are often optimised for faster inference so they can operate in real time on cloud infrastructure or edge devices.

Roles of Data in Object Detection with Deep Learning

For object detection with deep learning, if the model architecture is the engine of object detection, data is the fuel.

You can experiment with YOLO, Faster R-CNN, transformers, and anchor-free methods, but no matter how advanced the model is, its performance is always limited by the quality, structure, and diversity of the data it learns from.

Deep learning does not invent knowledge. It learns patterns from what it is shown.

That makes the data the most critical component in any object detection system.

Let’s see why:

-

Data Defines What the Model Learns

Object detection models learn by comparing predicted outputs with labelled ground truth. During training, the model repeatedly adjusts its internal parameters to minimise the difference between predicted results and annotated data.

This means the dataset acts as the model’s teacher.

If the training data contains errors, inconsistencies, or missing examples, the model absorbs those patterns as if they were correct. Unlike rule-based systems, deep learning models cannot distinguish between correct instructions and flawed data.

For instance, if bounding boxes are loosely drawn, the model learns imprecise spatial boundaries. If certain objects are frequently missed during annotation, the model may learn to ignore them entirely. If labels are inconsistent across annotators, the model receives conflicting signals about object categories.

Over time, these issues create a ceiling on performance. No matter how much the model is tuned or retrained, its predictions cannot exceed the quality of the data it was trained on.

.jpeg)

-

Annotation Quality Shapes Detection Accuracy

In object detection, image annotation is the process that transforms raw images into structured training data. Each annotated image includes the object’s class label and the coordinates of the bounding box that define its location.

These annotations provide the spatial guidance that helps the model understand where objects appear in an image.

However, the effectiveness of this guidance depends heavily on annotation precision and consistency. Bounding boxes must tightly enclose objects so the model can learn accurate localisation. If boxes are too large or inconsistently drawn, the model struggles to learn precise object boundaries.

Clear annotation guidelines are also necessary to handle complex scenarios such as partially visible objects, overlapping objects, and blurred images. When multiple annotators label data, consistent interpretation becomes essential. Even small variations in annotation style can introduce noise that affects model training.

In large datasets, annotation quality becomes one of the strongest predictors of detection performance. -

Dataset Diversity Determines Real-World Reliability

While annotation precision is important, the dataset must also represent the variability of real-world environments.

Objects rarely appear in identical conditions. Lighting changes throughout the day, weather affects visibility, camera angles vary, and objects may appear partially hidden or surrounded by clutter.

If training data only includes ideal conditions, the model will struggle when deployed in more complex environments.

A robust dataset must include variation in object sizes, viewing angles, backgrounds, lighting conditions, and occlusions. This diversity allows the model to learn generalizable patterns rather than memorizing narrow scenarios.

Without sufficient diversity in the training data, object detection systems perform well during testing but fail when exposed to new environments. -

Scaling Data for Production Systems

A proof-of-concept object detection model may be trained on a few thousand annotated images. However, production systems typically require much larger datasets to capture the variability present in real-world environments.

As object detection systems move toward deployment, dataset sizes often expand to tens or even hundreds of thousands of labelled images.

Scaling datasets introduces operational challenges. Multiple annotators must follow consistent labelling guidelines. Annotation workflows must be coordinated to maintain quality across large volumes of data. Dataset versioning and management processes must track updates over time.

Without structured processes, scaling annotation efforts can introduce inconsistencies that reduce model reliability. -

Continuous Data Improvement

Object detection systems do not remain static after deployment. Real-world environments constantly introduce new scenarios that the model has not previously encountered.

For example, new product packaging may appear in retail stores, different lighting conditions may emerge in surveillance environments, or previously unseen defects may appear in manufacturing processes.

Capturing these new examples and incorporating them into the training dataset is essential for maintaining detection accuracy. This feedback loop, where model errors are analysed and used to refine the dataset, allows the system to continuously improve over time.

.jpeg)

Object Detection Models Explained in Depth

Over the years, several architectures have shaped the evolution of object detection with deep learning. While they all aim to solve the same core problem: identifying objects and localising them with an image, they differ in how they approach it.

- Some prioritise speed

- Some prioritise accuracy

- Some attempt to balance both

Understanding these models gives you clarity about why architectural choice matters and why data quality still remains the underlying driver of performance.

Let’s explore the major one.

-

YOLO(You Only Look Once)

YOLO fundamentally changed object detection by introducing a single-stage detection approach that treats detection as a unified regression problem.

Instead of generating region proposals and then clarifying them separately, YOLO processes the entire image in one pass through the network. The image is divided into grid cells, and each grid cell predicts bounding boxes along with class probabilities.

These single-shot designs make YOLO extremely fast. It can perform real-time detection, which makes it ideal for approaches such as

- Autonomous vehicles

- Surveillance systems

- Drone navigation

- Live video analytics

The strength of YOLO lies in speed and global reasoning. Because it sees the entire image in one pass through the network. The image is divided into grid cells, and each grid cell predicts bounding boxes along with class probabilities.

However, early versions of YOLO struggled with detecting very small objects and handling crowded scenes. Later iterations significantly improved these limitations through better feature pyramids and multi-scale prediction strategies.

In short, YOLO optimised for real-time, high-speed detection with competitive accuracy. -

Faster R-CNN

Faster R- CNN represents the two-stage detection paradigm and is often associated with higher accuracy, particularly in complex scenes.

It operates in two steps.”

First, a region proposal network scans the image to identify potential object regions. These are candidate boxes that might contain objects.

Second, these proposed regions are passed through a classifier that determines the object category and refines the bounding box coordinates.

Because it separates region generation from classification, Faster R-CNN tends to produce more precise detection, especially for small or densely packed objects.

The tradeoff is computational cost. Two-stage detection requires more processing time compared to single-stage models like YOLO.

Faster R-CNN is often preferred in domains where accuracy is more important than speed, such as

- Medical imagining

- Research applications

- Industrial inspection systems

- It emphasises precision over real-time performance.

-

Single shot detector(SSD)

SSD was developed to bridge the gap between speed and accuracy. Like YOLO, SSD is a single-stage detector. It eliminates the region proposal step and directly predicts bounding boxes and class scores in one forward pass.

What differentiates SSD is its use of multi-scale feature maps. It performs detection at different layers of the network, allowing it to better detect objects of varying sizes.

Early layers detect smaller objects, while deeper layers detect larger ones.

This makes the SSD more balanced compared to early YOLO versions. It achieves a relatively high speed while maintaining reasonable detection accuracy.

SSD became particularly popular for mobile embedded systems because it offers a practical compromise between computational efficiency and performance. -

Transformer-based detector

Transformer-based object detectors represent a newer generation of architectures that rethink detection without traditional anchors or region proposals.

Models like DETR use attention mechanisms instead of conventional heavy pipelines. Attention allows the model to focus on relevant parts of the image by modeling long range dependencies directly.

Unlike traditional detectors that rely on anchor boxes and grid-based predictions, transformer-based detectors treat object detection as a direct set prediction problem.

This simplifies the pipeline by

- Removing hand-designed anchor boxes

- Eliminating non-maximum suspension in some cases

- Enabling end-to-end training

However, transformer-based detectors typically require a larger dataset and more computational resources to train effectively. They also may converge more slowly compared to CNN-R-based models.

These models are promising for the future of object detection, particularly in large-scale or multi-modal systems.

Real-world Applications for Deep Learning for Object Detection

Object detection with deep learning is no longer confined to research labs. It is actively transforming industries by turning visual data into structured, actionable intelligence.

Across industries, applications are both practical and high impact.

-

Healthcare

In healthcare, object detection plays a crucial role in medical imaging and diagnostics.

Detecting anomalies like tumours, fractures, lesions, or abnormal growth patterns requires precision and consistency. Deep learning based detection systems assist clinicians by identifying suspicious regions and highlighting them for future examination.

Instead of manually scanning every pixel in an image, doctors can focus their attention on AI-flagged areas. This improves efficiency and can support earlier detection of critical conditions.

Beyond radiology, object detection is also used in

- Pathology side analysis

- Surgical assistance system

- Monitoring patient movement in ICU settings

- Automated medical inventory tracking

-

Retail

Retail stores today are powered by computer vision systems that rely on object detection. In physical stores, cameras can monitor shelves and detect out-of-shelf items in real time. Instead of relying solely on manual checks, retailers can automatically identify gaps and trigger replenishment workflow.

Object detection also enables

- Automated checkout systems

- Loss prevention and theft detection

- Customer behaviour analysis

- Planogram compliance monitoring

For example, detecting whether products are placed on the correct shelf can improve merchandising efficiency. Identifying product misplacement or low inventory can directly impact revenue.

Retail environments are dynamic and unpredictable. Lighting changes, crowded scenes, overlapping objects, and packaging variations make detection challenging. For reliable deployment, a robust data set that captures variation is crucial. -

Manufacturing

In manufacturing, object detection is integrated into quality control systems. High-speed production lines generate thousands of products per hour. Manual inspection tends to be time-consuming and prone to error. Deep learning models can detect errors such as cracks, dents, surface irregularities, or missing components with high consistency.

Object detection systems in manufacturing are often used for

- Surface defect detection

- Assembly verification

- Consulting components

- Ensuring packaging integrity

However, manufacturing environments often present unique challenges such as reflective surfaces, varying material textures, and small defect sizes. These require highly precise annotations and diverse training data to achieve reliable detection accuracy. -

Autonomous systems

Autonomous systems represent one of the most demanding applications of object detection.

In autonomous vehicles, drones, and robotics, object detection is fundamental to decision-making. The system must continuously detect pedestrians, vehicles, road signs, obstacles, and lane markings in real time.

In these systems, detection is not just about recognition; it influences navigation and safety.

For example, a self-driving car must not only detect pedestrians but also determine their exact location and distance to calculate braking actions.

An autonomous system requires

- Real-time inference

- High precision under varying lightning and weather conditions

- Robust performance in crowded environments

Failure to detect or incorrectly localise objects can result in severe consequences. Therefore, training data must include diverse environmental conditions such as night scenes, rain, fog, and occlusions.

Challenges in Object Detection

Object detection with deep learning has advanced dramatically over the last few years. Though models are faster, more accurate, and more deployable than ever before, building a reliable object detection system remains one of the most complex tasks in computer vision.

The challenge here is not just about detecting images but detecting them accurately, consistently, and in unpredictable & evolving real-world environments.

Let’s explore the key challenges the teams encounter when moving from experimentation to production:

-

Small object detection

Detecting small objects is harder than detecting bigger ones. When objects occupy only a tiny portion of an image, they contain fewer pixels and less visual information. This makes it difficult for the model to distinguish them from background noise.

For example, identifying distant pedestrians in a street scene or detecting a microcrack on a manufactured surface requires high-resolution inputs and a well-designed feature extraction layer. Even a slight inconsistency in annotation can severely impact small object detection performance. -

Occlusion and overlapping objects

Real-world scenes are rarely clean. Objects overlap, partially block each other, or appear behind other elements.

A pedestrian partially hidden behind the vehicle or product stacked closely on a shelf introduces ambiguity. The model must learn to recognise incomplete visual cues while still predicting accurate bounding boxes.

Without sufficient examples of occluded objects in the training data, detection performance degrades quickly in such a scenario. -

Variation in lightning and the environment

Object detection often struggles when deployed environments differ from their training data. Slight changes in lightning, shadows, reflections, weather conditions, or camera angles can significantly alter how objects appear. A model trained primarily on daytime images may underperform in nightmare conditions. Similarly, glare on a metallic surface can confuse the model’s defect detection system.

This is one of the most common reasons for the models not performing well in controlled testing, failing in real-world deployment. -

Class imbalance and rare events

In many practical applications, some object classes appear far more frequently than others. This creates class imbalance. For example, in traffic detection, though cars may appear thousands of times, bicycles appear only occasionally. In medical imaging, abnormal cases may be rare compared to normal scans. Models trained on imbalanced datasets often become biased toward dominant classes and fail to detect rare but critical objects. Addressing this requires careful dataset design and augmentation strategies. -

Real-time performance constraints

In applications like autonomous driving or live surveillance, detection needs to happen in real time. Balancing speed and accuracy is a technical challenge; a complex model may deliver higher accuracy but introduce latency, which would make it impractical for real-time use.

Optimising models for deployment on edge devices adds more constraints related to memory usage and computational efficiency.

How to Improve Object Detection Performance

Improving object detection performance is rarely about a single adjustment. It is the result of systematic refinement across data, model architecture, training strategy, and deployment feedback.

Many teams initially assume that switching to a newer model architecture will dramatically improve results. While architecture matters, sustainable performance gains usually come from improving the entire pipeline, especially the data layer.

Let’s explore the key areas that improve detection performance.

-

Improve Data Quality and Diversity

The most impactful improvement often begins with the right dataset. A model trained on limited or inconsistent data cannot generalise well. Expanding dataset diversity in terms of lighting conditions, object scales, camera angles, and environmental contexts directly strengthens robustness.

Including real-world edge cases such as motion blur, occlusion, crowded scenes, and low-light conditions ensures the model is prepared for the realities of deployment environments. When performance drops in production, reviewing failure cases and incorporating them into retraining cycles is one of the most effective strategies for continuous improvement.

If the model struggles with certain scenarios, the solution is often to show more representative examples. -

Refine Annotation Precision

Object detection machine learning is highly sensitive to annotation quality. Tight, consistent bounding boxes help the model learn accurate localisation. Even small variations in how annotators draw boxes can affect regression performance.

Establishing clear annotation guidelines and reviewing annotations through multi-stage quality-control processes reduces noise in the dataset. Establishing consistency across annotators is also critical. If two people label the same image differently, the model receives conflicting signals.

Improving annotation precision often results in measurable improvements in localisation accuracy without changing the architecture. -

Address Class Imbalance

When certain object classes dominate the dataset, the model naturally becomes biased toward them. Rare classes often suffer from poor recall.

Improving performance requires either collecting more samples of underrepresented classes or applying techniques such as weighted loss functions and targeted data augmentation.

Balancing the dataset ensures that the model allocates learning capacity appropriately across all categories, especially those that are operationally critical. -

Apply Data Augmentation Strategically

Data augmentation artificially increases dataset diversity by applying controlled transformations such as rotation, scaling, flipping, cropping, and brightness adjustments.

These techniques simulate real-world variability and help the model generalise better. Advanced augmentation strategies, such as mosaic augmentation or random erasing, can further strengthen robustness.

However, augmentation must be applied thoughtfully. Unrealistic transformations can introduce artefacts that confuse the model rather than help it. -

Optimise Training Parameters

Hyperparameters play a significant role in model performance. Adjusting learning rates, batch sizes, anchor configurations, and optimisation strategies can improve convergence and stability. Transfer learning, initialising a model with pretrained weights from large datasets, can accelerate learning and improve accuracy, especially when labelled data is limited.

Monitoring validation metrics carefully helps prevent overfitting and ensures that improvements are genuine rather than dataset-specific.

What to Look for in an Annotation Platform

When selecting an annotation partner for object detection projects, teams typically evaluate four capabilities:

-

Annotation Accuracy

Deep learning for Object detection models is highly sensitive to bounding box precision. Even small inconsistencies in annotation can reduce localisation accuracy.

A reliable platform should provide:

- Clear annotation guidelines

- Experienced annotators trained in computer vision tasks

- Multi-layer quality assurance workflows

- Consistent labelling across large datasets

-

Scalable Dataset Production

While prototype models may train on a few thousand images, production systems often require hundreds of thousands of annotated samples.

Annotation platforms must support:

- Large distributed annotation teams

- Scalable labelling workflows

- Dataset management infrastructure

- Rapid dataset expansion as models evolve

-

Domain-Specific Expertise

Different industries require specialised annotation knowledge.

For example:

- Healthcare datasets require medical domain understanding

- Manufacturing datasets require defect-level precision

- Retail datasets require detailed product labelling

Platforms that provide domain-trained annotators can significantly improve dataset reliability.

Building High-Quality Object Detection Datasets with Taskmonk

For AI teams deploying computer vision systems, managing the entire data pipeline internally can be difficult.

Platforms like Taskmonk provide managed data annotation workflows designed specifically for training machine learning models.

-

Generate High-Quality Bounding Box Annotations

Precise bounding boxes are critical for training accurate object detection machine learning models.

Taskmonk provides:

- Trained annotators specialized in computer vision tasks

- Detailed annotation guidelines

- Multi-layer review processes

- Consistent labelling across large datasets

-

Scale Object Detection Datasets Efficiently

Object detection machine learning systems rarely succeed with small datasets.

Production models often require hundreds of thousands of annotated images.

Taskmonk helps teams:

- scale annotation workflows quickly

- manage large annotation teams

- accelerate dataset expansion

- maintain consistent annotation standards across projects

-

Maintain Quality at Scale

As annotation projects grow, maintaining consistency becomes increasingly difficult.

Taskmonk uses structured quality assurance workflows, including:

- Multi-stage annotation review

- Validation sampling

- Dataset quality checks

- Feedback loops between ML teams and annotators

-

Support Complex Computer Vision Use Cases

Object detection datasets vary widely across industries.

Taskmonk supports annotation for use cases such as:

- Retail shelf monitoring

- Autonomous vehicle perception

- Manufacturing defect detection

- Medical imaging analysis

Conclusion

Object detection with deep learning is powering real-world systems across healthcare, retail, manufacturing, and autonomous technologies. But while model architectures continue to evolve, one principle remains constant:

Data drives performance.

The most accurate detection systems are built on diverse, well-annotated, quality-controlled datasets that reflect real-world complexity.

If your model isn’t performing as expected, the answer may not lie in changing the architecture; it may lie in improving the data pipeline.

Focus on data quality.

Standardise annotation.

Build continuous feedback loops.

That’s how object detection moves from an impressive demo to a reliable production system.

FAQs

-

What is object detection in deep learning?

Object detection in deep learning is a computer vision task where a trained neural network identifies objects in an image and determines their exact locations using bounding boxes.

Unlike basic recognition, it answers two questions at once:

What objects are present?

Where exactly are they located?

The model outputs class labels (such as car, person, defect) along with coordinates that define the object’s position in the image. -

How is object detection different from image classification?

Image classification assigns a single label to an entire image. It tells you what the image contains, but does not specify where the object is.

Object detection, on the other hand, identifies multiple objects within the same image and provides their locations using bounding boxes.

In simple terms:

- Classification says, “This is a dog.”

- Detection says, “There is a dog here, and a ball there.”

- Detection combines classification with localisation.

-

What are the main types of object detection algorithms?

Object detection algorithms are generally divided into two categories:

One-stage detectors such as YOLO and SSD directly predict bounding boxes and class labels in a single pass through the network. They are faster and suitable for real-time applications.

Two-stage detectors, such as Faster R-CNN, first generate region proposals and then classify those regions. They typically offer higher accuracy but are slower.

Newer approaches also include transformer-based detectors that use attention mechanisms to model object relationships across the entire image. -

What are some real-world applications of object detection?

Object detection is widely used across industries.

In healthcare, it helps detect tumours and abnormalities in medical images.

In retail, it enables automated checkout and shelf monitoring.

In manufacturing, it identifies product defects on assembly lines.

In autonomous systems, it detects pedestrians, vehicles, and obstacles for navigation and safety.

Any system that needs to interpret visual scenes and take action based on object location relies on object detection.

.jpeg)

.png)