Autonomous AI Systems: The Future of Self-Guided Intelligence

TL;DR

Autonomous AI systems are AI-powered software systems designed to complete tasks end-to-end rather than simply generate responses.

This approach shifts AI from passive assistance to operational execution, enabling systems to resolve tickets, update records, trigger workflows, and monitor processes across multiple business applications.

This distinction is what makes autonomous AI systems valuable for operational workflows like:

- customer support automation

- IT service management

- finance reconciliation

- sales operations

- internal knowledge workflows

In these environments, most work is not a single decision but a chain of actions across multiple systems.

Autonomous AI systems coordinate those actions without requiring constant human supervision.

However, successful deployment requires strong governance, high-quality training data, guardrails, and observability frameworks to ensure safe and reliable automation.

Introduction

AI has already made everyday work faster in small ways: drafting responses, summarizing tickets, classifying documents, and answering internal questions.

Useful, but still mostly passive. Autonomous AI systems change the fundamental operating model of AI.

Instead of stopping at a suggestion, they can execute multi-step workflows: gather context, update records, trigger workflows, follow up, and close loops across tools.

That’s why “autonomous” is becoming the next frontier of agentic AI. It’s not about a single model becoming dramatically smarter; it’s about building a system that can plan and execute safely inside real business constraints.

In this guide, we’ll break down what autonomous AI systems are, how autonomous AI works under the hood, and where the benefits show up fastest, while also covering the governance and data foundations you need to get autonomy right.

What are autonomous AI systems?

Autonomous AI systems are AI-driven software systems that can pursue a goal end-to-end by planning steps, taking actions in tools or applications, checking results, and adjusting their approach with minimal human input.

Instead of answering a single prompt like a chatbot, an autonomous AI system operates as a continuous decision loop:

goal → plan → execute → evaluate → iterate.

A simple way to think about it:

- A traditional AI model produces an output (a prediction, a label, a summary).

- An autonomous AI system produces an outcome (a resolved ticket, a completed workflow, an updated record, a generated report that is also delivered and tracked).

Autonomous AI system vs autonomous AI agent

You will often see the terms “autonomous agents” or “autonomous AI agents.” An agent is usually the unit that performs tasks, like a worker.

An autonomous AI system is the full setup that makes the agent usable in real life: the agent plus the tools it can access, the permissions it has, the memory it uses, the rules it must follow, and the monitoring that keeps it safe.

So agents are the doers. Systems are the production-grade environment that makes doing reliable.

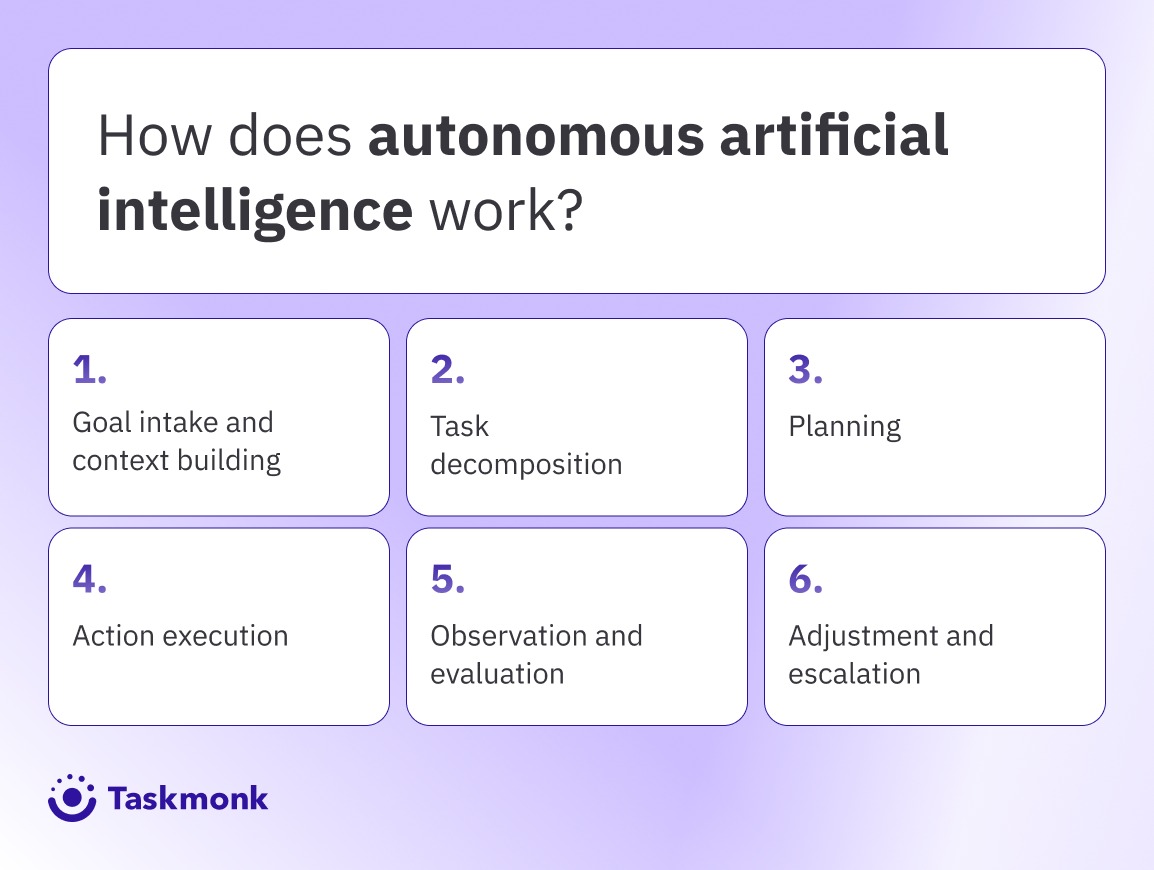

How does autonomous artificial intelligence work?

Autonomous artificial intelligence works by running a closed-loop control system around an AI model. Instead of generating a single response, it repeatedly:

- understands the goal and context

- plans a sequence of actions

- executes those actions through tools

- evaluates whether it’s getting closer to the goal

- adapts the plan until it reaches a stop condition (done, blocked, or escalated)

This loop is what turns “AI that talks” into “AI that does”.
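The loop can be sketched in a few lines of Python. This is a minimal illustration, not a production framework: `Step`, `run_loop`, and the optional `replan` callback are hypothetical names, tool calls are plain functions over a state dictionary, and the three return values mirror the stop conditions above (done, blocked, or escalated).

```python
from dataclasses import dataclass
from typing import Callable, List, Optional


@dataclass
class Step:
    name: str
    act: Callable[[dict], dict]    # hypothetical tool call: reads state, returns updates
    check: Callable[[dict], bool]  # step-level evaluation after the action


def run_loop(
    goal_met: Callable[[dict], bool],
    plan: List[Step],
    state: dict,
    replan: Optional[Callable[[dict], List[Step]]] = None,
    max_iters: int = 10,
) -> str:
    """Execute -> evaluate -> adapt until a stop condition: done, blocked, or escalated."""
    queue = list(plan)
    for _ in range(max_iters):
        if goal_met(state):
            return "done"
        if not queue:
            return "blocked"            # plan exhausted but goal not reached
        step = queue.pop(0)
        state.update(step.act(state))   # execute through a (mock) tool
        if not step.check(state):
            new_plan = replan(state) if replan else None
            if not new_plan:
                return "escalated"      # no way forward: hand off to a human
            queue = list(new_plan)      # adapt: replace the remaining plan
    # Iteration budget spent: finish only if the goal was reached on the last step.
    return "done" if goal_met(state) else "escalated"


ticket = {"status": "open"}
plan = [
    Step("fetch_context", lambda s: {"context": "order delayed"}, lambda s: "context" in s),
    Step("resolve", lambda s: {"status": "resolved"}, lambda s: s["status"] == "resolved"),
]
print(run_loop(lambda s: s["status"] == "resolved", plan, ticket))  # done
```

Capping iterations with `max_iters` is one simple guard against an agent that keeps looping without converging.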

Goal intake and context building

The system starts with a goal (for example: “resolve this ticket” or “reconcile invoices”). It then pulls the context it’s allowed to use: history, policies, account state, and recent actions. Strong systems retrieve only what’s relevant, not everything. If critical context is missing, they pause or escalate rather than guess.
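As an illustration, the sketch below assumes context arrives as tagged snippets. The source names, the `REQUIRED_SOURCES` set, and the keyword-overlap scoring are all hypothetical stand-ins for a real retriever and access policy; the point is the shape: filter to what the agent may see, keep only what is relevant, and escalate instead of guessing when critical context is absent.

```python
ALLOWED_SOURCES = {"ticket_history", "policies", "account_state"}  # hypothetical scope
REQUIRED_SOURCES = {"account_state"}  # hypothetical: cannot act safely without it


def relevance(goal_terms: set, text: str) -> int:
    # Naive keyword overlap; a production system would use a proper retriever.
    return len(goal_terms & set(text.lower().split()))


def build_context(goal: str, candidates: list, top_k: int = 3) -> dict:
    """Keep only permitted, relevant snippets; escalate if critical context is missing."""
    goal_terms = set(goal.lower().split())
    permitted = [c for c in candidates if c["source"] in ALLOWED_SOURCES]
    missing = REQUIRED_SOURCES - {c["source"] for c in permitted}
    if missing:
        return {"status": "escalate", "missing": sorted(missing)}
    relevant = [c for c in permitted if relevance(goal_terms, c["text"]) > 0]
    relevant.sort(key=lambda c: relevance(goal_terms, c["text"]), reverse=True)
    return {"status": "ok", "context": [c["text"] for c in relevant[:top_k]]}


snippets = [
    {"source": "account_state", "text": "plan pro refund eligible"},
    {"source": "policies", "text": "refund allowed within 30 days"},
    {"source": "hr_records", "text": "refund of salary advance"},    # outside scope
    {"source": "ticket_history", "text": "login issue last week"},  # irrelevant
]
print(build_context("process refund for customer", snippets))
```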

Task decomposition

The goal is split into smaller tasks that the system can execute safely. This includes identifying missing inputs, dependencies, and what can run automatically vs what needs approval. Mature agents build a short checklist of “must-verify” items before taking action. Weak agents skip this and jump straight into tool calls.
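A decomposition step can be represented as a small dependency graph. The sketch below uses Python’s standard `graphlib.TopologicalSorter` (Python 3.9+) to order tasks so that dependencies run first; the task names, required inputs, and approval flags for a hypothetical invoice-reconciliation goal are illustrative assumptions.

```python
from graphlib import TopologicalSorter  # standard library, Python 3.9+

# Hypothetical decomposition of "reconcile invoices"; names and flags are illustrative.
TASKS = {
    "fetch_invoices":   {"deps": [], "inputs": ["vendor_id"], "needs_approval": False},
    "fetch_payments":   {"deps": [], "inputs": ["vendor_id"], "needs_approval": False},
    "match_records":    {"deps": ["fetch_invoices", "fetch_payments"], "inputs": [],
                         "needs_approval": False},
    "post_adjustments": {"deps": ["match_records"], "inputs": ["gl_account"],
                         "needs_approval": True},
}


def decompose(tasks: dict, available_inputs: set) -> dict:
    """Order tasks by dependency and build the must-verify checklist before acting."""
    graph = {name: task["deps"] for name, task in tasks.items()}
    order = list(TopologicalSorter(graph).static_order())  # dependencies come first
    needed = {i for task in tasks.values() for i in task["inputs"]}
    return {
        "order": order,
        "missing_inputs": sorted(needed - available_inputs),  # resolve or ask first
        "automatic": [n for n in order if not tasks[n]["needs_approval"]],
        "needs_approval": [n for n in order if tasks[n]["needs_approval"]],
    }


plan = decompose(TASKS, available_inputs={"vendor_id"})
print(plan["missing_inputs"])  # ['gl_account']
print(plan["needs_approval"])  # ['post_adjustments']
```

The `missing_inputs` list doubles as the must-verify checklist: if it is non-empty, the agent should ask for those inputs before touching any tool.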

Planning

Planning decides the route: which tools to use, in what order, and what success looks like at each step. A practical plan includes stop rules for when to ask a human, when to retry, and when to halt. Better systems re-plan based on outcomes rather than sticking to a rigid script. This is where autonomy becomes controlled execution.
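Stop rules are easiest to audit when they are explicit data rather than buried in prompts. The following is a minimal sketch under that assumption: each planned step (`PlannedStep`, a hypothetical structure) records its success criterion, retry budget, and the error types that should trigger a human or a full halt, and `stop_rule` turns one attempt’s outcome into the next move.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class PlannedStep:
    tool: str                 # hypothetical tool name
    success: str              # what "done" means for this step (kept for audit logs)
    max_retries: int = 2      # stop rule: retries allowed before escalating
    ask_human_on: tuple = ()  # errors that need a person's judgment immediately
    halt_on: tuple = ()       # errors that should stop the whole plan


def stop_rule(step: PlannedStep, retries_used: int, error: Optional[str]) -> str:
    """Map the outcome of one attempt to 'continue', 'retry', 'ask_human', or 'halt'."""
    if error is None:
        return "continue"
    if error in step.halt_on:
        return "halt"
    if error in step.ask_human_on:
        return "ask_human"
    if retries_used < step.max_retries:
        return "retry"
    return "ask_human"  # retry budget spent: escalate instead of looping


plan = [
    PlannedStep("crm.lookup_account", success="account record returned",
                halt_on=("account_closed",)),
    PlannedStep("billing.issue_refund", success="refund id returned",
                max_retries=1, ask_human_on=("amount_over_limit",)),
]
print(stop_rule(plan[0], retries_used=0, error="timeout"))  # retry
```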

Action execution

The system executes through tool calls: APIs, databases, CRMs, ticketing systems, workflow engines, or controlled code environments. Actions can be external (e.g., updating a record, triggering a workflow) or intermediate (e.g., producing a structured summary for the next step). In production, permissions are scoped tightly, and every action is logged. Tool design often decides whether the system is safe.
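Tight scoping and logging can be enforced in the layer that dispatches tool calls. The wrapper below is a hypothetical sketch, not the API of any particular agent framework: tools are plain Python callables, the allow-list models least-privilege access, and every attempt, including denied and failed ones, lands in an audit log.

```python
import time


class ScopedToolbox:
    """Hypothetical wrapper: only allow-listed tools run, and every call is logged."""

    def __init__(self, tools: dict, allowed: set):
        self.tools = tools
        self.allowed = allowed  # least privilege: explicit allow-list per agent
        self.audit_log = []

    def call(self, name: str, **kwargs):
        entry = {"ts": time.time(), "tool": name, "args": kwargs}
        if name not in self.allowed:
            entry["outcome"] = "denied"
            self.audit_log.append(entry)
            raise PermissionError(f"tool {name!r} is outside this agent's scope")
        try:
            result = self.tools[name](**kwargs)
        except Exception as exc:
            entry["outcome"] = f"error: {exc}"  # failures are logged, then re-raised
            self.audit_log.append(entry)
            raise
        entry["outcome"] = "ok"
        self.audit_log.append(entry)
        return result


# Mock tools standing in for real ticketing and identity APIs.
tools = {
    "ticket.update": lambda ticket_id, status: {"id": ticket_id, "status": status},
    "user.delete": lambda user_id: None,
}
box = ScopedToolbox(tools, allowed={"ticket.update"})
box.call("ticket.update", ticket_id="T-1", status="resolved")
try:
    box.call("user.delete", user_id="U-9")
except PermissionError as err:
    print(err)
print([e["outcome"] for e in box.audit_log])  # ['ok', 'denied']
```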

Observation and evaluation

After each action, the system checks whether it moved closer to the goal. Validation can be rule-based (status changed, constraints met), handled by evaluator checks, or done by comparison against known ground truth. If evaluation is weak, the agent can loop, drift, or take confident wrong turns. Reliable evaluation is what makes autonomy repeatable.
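Rule-based validation can be as simple as comparing state before and after an action. This sketch assumes records are dictionaries; the three checks (state changed, constraints hold, fields match a ground-truth record) follow the validation types named above, while the refund limit and field names are hypothetical.

```python
from typing import Optional


def evaluate_step(before: dict, after: dict, expected: Optional[dict] = None,
                  constraints: tuple = ()) -> dict:
    """Rule-based checks after an action; the specific checks are illustrative."""
    failures = []
    if before == after:                  # did the action change anything?
        failures.append("no_state_change")
    for name, rule in constraints:       # do business constraints still hold?
        if not rule(after):
            failures.append(f"constraint:{name}")
    if expected is not None:             # compare against ground truth, if available
        for key, value in expected.items():
            if after.get(key) != value:
                failures.append(f"mismatch:{key}")
    return {"passed": not failures, "failures": failures}


limits = (("refund_under_limit", lambda rec: rec["refund"] <= 50),)  # hypothetical policy
before = {"status": "open", "refund": 0}
good = evaluate_step(before, {"status": "resolved", "refund": 40}, {"status": "resolved"}, limits)
bad = evaluate_step(before, {"status": "open", "refund": 80}, {"status": "resolved"}, limits)
print(good)  # {'passed': True, 'failures': []}
print(bad)   # {'passed': False, 'failures': ['constraint:refund_under_limit', 'mismatch:status']}
```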

Adjustment and escalation

Based on the evaluation, the system proceeds, retries with a different approach, asks for clarification, or escalates to a human. Learning is typically split between short-term memory for the current task and offline improvements over time. The key is controlled adaptation, not uncontrolled self-modification. In mature setups, escalation is a feature, not a failure.
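The adjustment step can be sketched as a loop over alternative approaches. This is an illustrative assumption about how “retry with a different approach” might be structured, not a prescribed design: each approach is a function, the evaluator returns a verdict, and the function ends in one of three decisions: proceed, ask for clarification, or escalate.

```python
from typing import Callable, List


def attempt_with_fallbacks(approaches: List[Callable[[], dict]],
                           evaluate: Callable[[dict], dict]) -> dict:
    """Proceed on success, ask for clarification when information is missing,
    try the next approach on failure, and escalate when approaches run out."""
    history = []
    for approach in approaches:
        result = approach()
        verdict = evaluate(result)
        history.append((approach.__name__, verdict["passed"]))
        if verdict["passed"]:
            return {"decision": "proceed", "result": result, "history": history}
        if verdict.get("missing_info"):
            return {"decision": "ask_clarification",
                    "questions": verdict["missing_info"], "history": history}
    return {"decision": "escalate", "history": history}


# Hypothetical lookups: the first strategy fails, the alternative succeeds.
def lookup_by_email():
    return {"account": None}


def lookup_by_order_id():
    return {"account": "A-42"}


def found(result):
    return {"passed": result["account"] is not None}


outcome = attempt_with_fallbacks([lookup_by_email, lookup_by_order_id], found)
print(outcome["decision"], outcome["history"])
# proceed [('lookup_by_email', False), ('lookup_by_order_id', True)]
```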

Impact of autonomous artificial intelligence

The impact of autonomous artificial intelligence is not that it makes AI “smarter.” It changes where AI sits in the workflow. Instead of only supporting humans, it can execute: moving work across tools, coordinating steps, and closing loops.

That shift creates real operational upside, but also introduces new categories of risk that teams need to manage intentionally.

Let’s have a look at the most important impacts you’ll actually feel after deployment:

Faster workflows

The most visible impact is speed across multi-step work. Autonomous AI systems reduce handoffs by chaining actions humans typically coordinate: pulling context, updating records, triggering workflows, and following up until a task is complete.

This is why “autonomous support systems” can feel like a step-change for service teams: work moves even when people aren’t actively pushing it.

More coverage

Autonomous systems can continuously monitor queues, catch exceptions early, and keep processes from stalling. In practice, the impact shows up as fewer aged tickets, fewer “forgotten” follow-ups, and less backlog growth during peaks.

Data leverage

Autonomous systems rely heavily on signals like policies, labeled datasets, evaluation benchmarks, and ground-truth validation data. If those signals are messy, the system can scale the wrong behavior faster. That’s why foundations like AI annotation and image annotation matter more as you move from “assist” to “act.”

Bigger blast radius

When AI only suggests, the blast radius is limited. When AI acts, the potential impact of mistakes grows. The new risk category is operational: updating the wrong record, triggering the wrong workflow, sending the wrong message, or compounding small mistakes across steps.

That’s why production autonomy needs guardrails, stop rules, and audit trails.

New governance

Teams adopting autonomous AI end up measuring different things: tool-call success rates, escalation rates, recovery from failures, and end-to-end completion, alongside classic quality metrics. Observability becomes a core product requirement because without logs, you can’t debug, improve, or prove compliance.
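If each run is logged in a structured way, the metrics above fall out of a simple aggregation. The log shape here (`tool_calls` with an `ok` flag, and a final `outcome`) is a hypothetical assumption, and the four runs are toy data for illustration only.

```python
from typing import Optional


def ratio(num: int, den: int) -> Optional[float]:
    return num / den if den else None  # undefined when there is nothing to measure


def governance_metrics(runs: list) -> dict:
    """Aggregate per-run logs. Assumed (hypothetical) log shape per run:
    {"tool_calls": [{"ok": bool}, ...], "outcome": "done" | "escalated" | "blocked"}"""
    calls = [call for run in runs for call in run["tool_calls"]]
    hit_failure = [run for run in runs if any(not c["ok"] for c in run["tool_calls"])]
    return {
        "tool_call_success_rate": ratio(sum(c["ok"] for c in calls), len(calls)),
        "escalation_rate": ratio(sum(r["outcome"] == "escalated" for r in runs), len(runs)),
        "completion_rate": ratio(sum(r["outcome"] == "done" for r in runs), len(runs)),
        # recovery: share of runs that hit a failed call but still finished end-to-end
        "recovery_rate": ratio(sum(r["outcome"] == "done" for r in hit_failure), len(hit_failure)),
    }


runs = [
    {"tool_calls": [{"ok": True}, {"ok": True}], "outcome": "done"},
    {"tool_calls": [{"ok": False}, {"ok": True}], "outcome": "done"},
    {"tool_calls": [{"ok": False}], "outcome": "escalated"},
    {"tool_calls": [{"ok": True}], "outcome": "blocked"},
]
print(governance_metrics(runs))
```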

As organizations move toward autonomous AI systems, the quality of training data becomes significantly more important. Autonomous systems rely on labeled datasets, evaluation benchmarks, and ground-truth validation to make reliable decisions across workflows.

Platforms like Taskmonk help organizations build scalable data pipelines for AI development through high-quality AI annotation and image annotation services. These datasets support more reliable model behavior, which becomes critical when AI systems begin executing actions rather than simply generating suggestions.

Key features of autonomous AI agents

Autonomous AI agents aren’t defined by one model or one prompt. They’re defined by the capabilities around the model that make execution reliable: planning, tool use, self-checks, and guardrails.

Here are the core features to look for if you’re evaluating agents for real production workflows.

- Goal-driven execution with stop rules: Agents should understand what “success” means, keep moving toward it, and stop cleanly when they hit a blocker. The difference between useful autonomy and chaos is explicit stop conditions: halt, ask, escalate, or hand off.

- Planning + task decomposition: Before acting, agents break the goal into steps, identify missing inputs, and decide on an execution route. This planning makes behavior predictable, auditable, and easier to debug when something goes wrong.

- Tool use and orchestration across systems: Real agents don’t just generate text; they call tools: CRMs, ticketing systems, internal dashboards, databases, and APIs. Orchestration means sequencing tool calls correctly, handling errors, and maintaining state across steps.

- Step-level evaluation and self-checking: After each action, agents need validation: did the step succeed, did it move closer to the goal, did it violate constraints? This can be rule checks, evaluators, or comparisons against ground truth. Without this, agents can drift into incorrect decisions.

- Guardrails, permissions, and observability: Agents must operate inside boundaries: least-privilege access, approval gates for risky actions, policy constraints, and complete audit logs. Observability is what lets teams monitor performance, investigate failures, and govern autonomy safely at scale.
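An approval gate for risky actions can sit directly in front of tool execution. In this sketch, the risk classification (`RISKY_TOOLS`), the refund threshold, and the callback names are hypothetical policy choices; `request_approval` stands in for whatever human review queue a team actually uses.

```python
RISKY_TOOLS = {"records.delete", "contracts.sign"}  # hypothetical risk classification
AUTO_REFUND_LIMIT = 100                             # hypothetical policy threshold


def needs_approval(tool: str, args: dict) -> bool:
    if tool == "payments.refund":
        return args.get("amount", 0) > AUTO_REFUND_LIMIT  # small refunds are pre-approved
    return tool in RISKY_TOOLS


def gated_call(tool: str, args: dict, execute, request_approval) -> dict:
    """Run low-risk actions directly; route risky ones through a human approver first."""
    if needs_approval(tool, args) and not request_approval(tool, args):
        return {"status": "rejected", "tool": tool}
    return {"status": "executed", "result": execute(tool, args)}


executed = []


def execute(tool, args):      # stand-in for the real tool call
    executed.append(tool)
    return "ok"


def always_deny(tool, args):  # stand-in for a human approval queue
    return False


print(gated_call("payments.refund", {"amount": 40}, execute, always_deny)["status"])   # executed
print(gated_call("payments.refund", {"amount": 500}, execute, always_deny)["status"])  # rejected
```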

Autonomous AI vs. other types of AI

“Autonomous” is often used loosely, so it helps to be explicit about what counts as autonomous and what doesn’t.

The difference is not mere intelligence but execution and ownership of workflow steps.

Rules-based automation vs autonomous AI

Rules-based automation follows pre-defined if/then logic. It’s predictable, fast, and easy to govern, but it breaks down when inputs are messy or when the workflow requires judgment. Autonomous AI systems can handle variability because they can interpret context, choose a path, and adapt to unexpected events. The trade-off is control: you need guardrails, approvals, and observability because the system isn’t just executing a script.
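The brittleness of if/then logic is easy to see in a toy router. The rules below are hypothetical: they are predictable and trivially auditable, but a request phrased differently from what the rule author anticipated simply falls through.

```python
# Hypothetical if/then rules of the kind a rules-based automation might use.
RULES = [
    (lambda text: "password reset" in text.lower(), "send_reset_link"),
    (lambda text: "invoice" in text.lower() and "copy" in text.lower(), "email_invoice_copy"),
]


def route(ticket_text: str):
    """Return the first matching action, or None when no rule fires."""
    for matches, action in RULES:
        if matches(ticket_text):
            return action
    return None  # the script cannot interpret requests it was not written for


print(route("Password reset please"))            # send_reset_link
print(route("I can't log in since the update"))  # None
```

An autonomous system would try to interpret the unmatched request instead of returning nothing, which is exactly why it needs the guardrails described above.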

Traditional ML models vs autonomous AI systems

Traditional ML is great at narrow tasks: classification, forecasting, ranking, and anomaly detection. It typically outputs a score or label, and a human or separate system decides what to do next.

Autonomous AI systems go further: they use predictions as signals, then plan and execute actions through tools. In practice, ML helps decide what is likely true; autonomous AI tries to decide what to do about it, and then does it.
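The split between “what is likely true” and “what to do about it” can be made concrete with a classifier’s output. In this sketch the prediction dictionary, thresholds, and action names are hypothetical; the model only supplies a label and confidence, and the surrounding system maps that signal to an action, including escalation when confidence is low.

```python
def decide(prediction: dict, act_at: float = 0.9, confirm_at: float = 0.6) -> str:
    """The ML model supplies label + confidence; the system decides what to do.
    Thresholds and action names are hypothetical."""
    label, confidence = prediction["label"], prediction["confidence"]
    if confidence >= act_at:
        return f"run_workflow:{label}"           # act autonomously
    if confidence >= confirm_at:
        return f"confirm_with_customer:{label}"  # gather more signal first
    return "route_to_human"                      # too uncertain to act


print(decide({"label": "refund_request", "confidence": 0.95}))  # run_workflow:refund_request
print(decide({"label": "refund_request", "confidence": 0.40}))  # route_to_human
```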

LLM chatbots vs autonomous AI agents

A chatbot is primarily conversational: it answers questions, drafts content, and provides information to support humans.

An autonomous AI agent is operational: it takes a goal, calls tools, updates systems, and validates outcomes. Chatbots can be “smart” but still passive. Agents are designed to be active, which is why they require scoped permissions, step-level checks, and audit trails.

Copilots vs autopilots

Copilots assist. They suggest next steps, generate drafts, and help humans move faster—but humans stay responsible for execution.

Autopilots (or autonomous AI systems) can take ownership of execution for bounded workflows and escalate when needed. This is the practical distinction: if the AI only recommends, it’s a copilot; if it can complete an end-to-end workflow within guardrails, it’s closer to autopilot.

Agentic AI vs autonomous AI systems

“Agentic AI” usually describes behavior such as planning, tool use, and multi-step reasoning.

An autonomous AI system describes the full production setup: agentic behavior plus integrations, permissions, evaluation, monitoring, and governance. You can have agentic AI in a prototype. You have an autonomous AI system when that behavior is reliable enough to run real work with accountability.

Benefits of autonomous AI

Autonomous AI systems create value when they’re applied to the right kind of work: repeatable, tool-driven workflows where humans spend most of their time coordinating steps across systems.

When that fit is right, and guardrails are in place, the benefits are tangible.

Faster execution

Autonomous AI agents can chain steps that usually require multiple handoffs: gather context, update records, trigger workflows, and follow up until closure. This reduces cycle time in workflows like support resolution, onboarding, reconciliation, and internal service requests. The outcome is less “AI answered faster” and more “the work finished sooner.”

Higher throughput

Because autonomous AI systems can handle routine steps at scale, teams can process more volume without growing headcount linearly. Humans shift from doing every step to handling exceptions, approvals, and edge cases. This is where autonomy becomes an operational capacity multiplier, not just a productivity tool.

Better coverage

Autonomous systems don’t get tired or forget follow-ups. They can monitor queues continuously, chase pending tasks, and keep workflows from stalling, especially during spikes or outside business hours. For many organizations, this shows up as smaller backlogs, fewer aged tickets, and fewer “dropped” tasks.

More consistency

In policy-heavy workflows, autonomous AI can apply the same checks every time: required fields, compliance steps, escalation thresholds, and documentation standards. Humans vary under pressure; systems are consistent by default. The benefit is fewer process misses, provided the underlying rules, evaluation checks, and tool permissions are designed correctly.

However, consistent AI behavior depends heavily on high-quality labeled data and evaluation datasets. Poorly annotated training data can cause autonomous agents to apply rules incorrectly or scale mistakes across workflows.

Solutions like Taskmonk provide enterprise-grade AI annotation and data labeling services, helping organizations build reliable training datasets for machine learning and autonomous AI systems. Strong data foundations ensure agents apply policies correctly and maintain consistent performance across real-world operations.

Conclusion

Autonomous AI systems are less about “smarter models” and more about shifting AI from output to execution. When an agent can plan, act through tools, validate results, and escalate safely, you unlock faster workflows, higher coverage, and more consistent execution.

AI won’t stay confined to chat windows. Over the next few years, the biggest wins will come from self-guided intelligence embedded inside workflows—agents that don’t just recommend what to do, but reliably do it within clear boundaries.

As autonomy expands, governance will stop being optional. Observability, permissions, evaluation datasets, and audit trails will become baseline requirements, not “enterprise extras.”

However, autonomous systems are only as reliable as the data used to train and evaluate them. High-quality labeled datasets, evaluation benchmarks, and ground-truth validation play a critical role in ensuring AI agents behave predictably in real-world workflows.

Solutions like Taskmonk help organizations build these data foundations through scalable AI annotation and image annotation services, enabling teams to train models that power dependable autonomous AI systems.

As enterprises continue to integrate AI into operational infrastructure, the organizations that succeed will be those that pair intelligent systems with strong data pipelines, governance, and continuous evaluation.

FAQs

What are autonomous AI systems, and how do they differ from traditional AI?

Autonomous AI systems are goal-driven systems that can plan, take actions via tools, check results, and iterate until a task is done or escalated. Traditional AI usually produces a single output (a prediction, label, or summary). The key difference is execution: autonomous AI systems can complete workflows end-to-end, not just recommend steps. That’s why guardrails and observability matter more.

What technologies power autonomous AI systems?

Autonomous AI systems typically combine an LLM with retrieval (for context), a planning layer, tool integrations, and step-level evaluation. The tool layer connects to apps like CRMs, ticketing tools, and databases. Guardrails include least-privilege permissions and human-in-the-loop gates for high-risk actions. Observability (logs, traces, outcomes) is essential for debugging and governance.

What are the main applications of autonomous AI in different industries?

Common applications include customer support automation, IT helpdesk, finance ops (reconciliation and exception handling), sales ops (CRM updates and follow-ups), and operations/logistics (monitoring and workflow triggers). Autonomous AI agents work best in repeatable, tool-driven processes with clear policies. In regulated industries, autonomy is usually bounded by approvals and audit trails.

How do autonomous AI systems make decisions without human input?

They follow a loop. The system retrieves relevant context, selects the next best step, executes through tools, and validates outcomes using rules or evaluators. If confidence drops or risk increases, it escalates. So “decision-making” is mostly controlled step selection plus validation, not free-form autonomy.

What are the key benefits of implementing autonomous AI systems?

Key benefits include faster cycle times, higher throughput, fewer workflow handoffs, and better coverage for queues and repetitive processes. Autonomous AI systems can also enforce policies more consistently when guardrails are clear. The biggest gains show up when tool access is well scoped and evaluation is strong, so the agent avoids confident wrong actions.
